Many creative operations leads are making a fundamental measurement error: they equate generation speed with production velocity. In reality, being able to generate 400 variations of a campaign hero image in ten minutes doesn’t move the needle if it then takes the creative director four hours to sort through the noise, identify hallucinations, and verify brand compliance. This is the velocity paradox: as the “making” phase of creative work shrinks toward zero, the “evaluating” phase expands to fill the void.

For teams building repeatable asset pipelines, the goal isn’t just to produce more; it’s to reduce the friction between an initial prompt and a deliverable that meets professional standards. This requires a shift from viewing AI as a “magic button” to treating it as a high-throughput engine that requires a new type of operational governance. We are moving away from a linear labor model—where hours spent directly correlated with quality—and toward a curation-first model that prioritizes semantic refinement over manual retouching.


The Volume-to-Refinement Ratio: Redefining Production Velocity

Traditional creative production assumes a fairly predictable relationship between labor hours and asset volume. If a designer needs to create twenty social media banners, the time required is the sum of layout, asset selection, and manual adjustment. Generative tools break this linear relationship. You can now produce those twenty banners in seconds, but the cognitive load of selection grows with every additional option someone has to judge.

Velocity, in an AI-augmented environment, is no longer measured by how fast a designer can push pixels. Instead, it is measured by the speed of the feedback-to-adjustment loop. If a creative lead identifies a lighting issue in an AI-generated image, the old workflow involved a ticket, a manual adjustment in a raster editor, and a re-export. A modern workflow uses an AI Image Editor to modify the asset semantically. This shifts the burden from the hand to the eye.
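If velocity is measured by the feedback-to-adjustment loop, it can be tracked directly from review logs rather than estimated. The sketch below assumes a hypothetical log in which each entry records when a note was raised and when the adjusted version was ready; the field names and sample timestamps are illustrative and do not come from any particular tool.

```python
from datetime import datetime
from statistics import median

# Hypothetical review-log entries; field names are illustrative only.
events = [
    {"asset": "hero-01", "feedback_at": "2024-05-01T10:00", "adjusted_at": "2024-05-01T10:04"},
    {"asset": "hero-02", "feedback_at": "2024-05-01T10:10", "adjusted_at": "2024-05-01T10:22"},
    {"asset": "banner-07", "feedback_at": "2024-05-01T11:00", "adjusted_at": "2024-05-01T11:07"},
]


def loop_minutes(event):
    """Minutes between a piece of feedback and the adjusted asset being ready."""
    start = datetime.fromisoformat(event["feedback_at"])
    end = datetime.fromisoformat(event["adjusted_at"])
    return (end - start).total_seconds() / 60


def median_loop_minutes(events):
    """Median loop time; the median resists a few unusually slow fixes."""
    return median(loop_minutes(e) for e in events)


print(median_loop_minutes(events))  # loops of 4, 12 and 7 minutes
```

Tracking the median rather than the total keeps the metric focused on the loop itself instead of on how many notes a given review happened to produce.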

However, we must be realistic about the “selection burden.” When options are effectively unlimited, decision fatigue becomes a legitimate production bottleneck. Teams that fail to implement strict curation filters or “automated gates” often find themselves paralyzed by the sheer volume of drafts. The bottleneck hasn’t disappeared; it has simply moved from the artist’s desk to the director’s screen.
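An automated gate can be as simple as a set of hard checks that drafts must pass before a human sees them, followed by a cap on how many survivors reach the director. The sketch below is a minimal illustration under stated assumptions: the `brand_score` and `flagged_artifacts` values are presumed to come from upstream checks (a brand-compliance scorer and an artifact detector) that are hypothetical here, and the thresholds are placeholders to tune per team.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    draft_id: str
    brand_score: float      # 0..1, from a hypothetical brand-compliance check
    min_side_px: int        # shortest edge of the image, in pixels
    flagged_artifacts: int  # count from a hypothetical artifact detector


def automated_gate(drafts, min_brand_score=0.8, min_side_px=1024, shortlist_size=5):
    """Drop drafts that fail hard checks, then cap how many reach a human."""
    passed = [
        d for d in drafts
        if d.brand_score >= min_brand_score
        and d.min_side_px >= min_side_px
        and d.flagged_artifacts == 0
    ]
    # Highest brand score first; the director only ever sees the shortlist.
    passed.sort(key=lambda d: d.brand_score, reverse=True)
    return passed[:shortlist_size]


drafts = [
    Draft("a", 0.90, 2048, 0),
    Draft("b", 0.70, 2048, 0),  # fails the brand-score check
    Draft("c", 0.95, 512, 0),   # too small
    Draft("d", 0.85, 1024, 1),  # artifact flagged
    Draft("e", 0.82, 1024, 0),
]
print([d.draft_id for d in automated_gate(drafts)])
```

The shortlist cap is the part that addresses decision fatigue directly: no matter how many drafts are generated, the human review load stays bounded.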

From Pixel Pushing to Semantic Editing with an AI Image Editor

The technical nature of editing is undergoing a fundamental transformation. For decades, “editing” meant cloning, healing, and masking at a granular level. If you wanted to remove an object or change the depth of field, you were manipulating individual pixels or groups of pixels. Using an AI Image Editor changes this to a semantic process. You are no longer “cloning out a person”; you are instructing the model to “re-imagine the background as if the person were never there.”

This shift requires a different skill set from junior designers. The value is no longer in their ability to use a pen tool with precision, but in their ability to describe visual intent and manage depth-aware editing. For instance, replacing a background used to involve painstaking masking of hair and translucent objects. Now, depth-aware AI models can segment a foreground in seconds.

Practical evidence suggests that this can cut the labor hours required for complex retouching by roughly 70% to 80%. One question remains open, however: there is not yet a standard for documenting prompt-based edits for long-term project handoffs. If a designer uses generative fill to fix a sleeve, that edit is often “baked in,” without the non-destructive layers that traditional art directors rely on for last-minute pivots.
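Until a standard emerges, one stopgap is to keep a plain edit log next to the project files: the prompt, the operation, and the untouched pre-edit file, so a later pivot can start from the original. The record layout below is a hypothetical sketch, not an established format; the field names and sample values are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class PromptEdit:
    asset_id: str
    tool: str                   # editor used, recorded as free text
    operation: str              # e.g. "generative_fill" (illustrative label)
    prompt: str                 # the exact instruction given to the model
    source_file: str            # pre-edit file, kept so the edit can be reverted
    output_file: str
    seed: Optional[int] = None  # record it only if the tool exposes one


def append_edit(log: List[dict], edit: PromptEdit) -> None:
    """Store the edit as a plain dict so the log serializes cleanly."""
    log.append(asdict(edit))


def history(log: List[dict], asset_id: str) -> List[dict]:
    """All recorded edits for one asset, in the order they were made."""
    return [e for e in log if e["asset_id"] == asset_id]


def to_json(log: List[dict]) -> str:
    return json.dumps(log, indent=2)


log: List[dict] = []
append_edit(log, PromptEdit(
    asset_id="hero-01",
    tool="AI Image Editor",
    operation="generative_fill",
    prompt="repair the left sleeve cuff, keep fabric texture",
    source_file="hero-01_v3.png",
    output_file="hero-01_v4.png",
))
print(to_json(history(log, "hero-01")))
```

Keeping `source_file` is what restores the “non-destructive” option: the baked-in edit can always be discarded and re-run from the recorded input.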

Breaking the Versioning Cycle: Real-Time Iteration Strategies

The traditional agency model of v1, v2, and v3 is inherently slow because it relies on asynchronous review cycles. You send a file, wait for a comment, and reopen the project. In a high-velocity pipeline, this cycle is the primary source of latency. Collapsing these delays requires tools that allow for “live” refinement during the review itself.

With a professional-grade AI Photo Editor, teams can make high-level adjustments, such as lighting shifts, color grading, or object replacement, while the creative lead is still on the call. This effectively turns the review session into the production session. Instead of taking notes on how to change a mood from “somber” to “energetic,” the operator can re-prompt the edit in real time and show the result immediately.

However, there is a risk of “infinite iteration.” Because it is so easy to generate another version, teams often fall into the trap of over-polishing or chasing a subjective “perfection” that doesn’t actually improve conversion or brand perception. Without clear guardrails—such as a hard limit on the number of AI-driven variations allowed per asset—the pipeline can stall on minor variations that a human editor would have ignored.
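A hard limit on AI-driven variations can be enforced mechanically rather than left to willpower during a live session. This is a minimal sketch of a per-asset variation budget; the class name and the limit value are hypothetical, and a real pipeline would likely persist the counts rather than hold them in memory.

```python
class VariationBudget:
    """Caps how many AI-driven variations a single asset may consume."""

    def __init__(self, limit: int):
        self.limit = limit
        self.used = {}  # asset_id -> variations generated so far

    def request(self, asset_id: str) -> bool:
        """Return True and count the variation if budget remains, else False."""
        count = self.used.get(asset_id, 0)
        if count >= self.limit:
            return False
        self.used[asset_id] = count + 1
        return True


budget = VariationBudget(limit=3)
print([budget.request("hero") for _ in range(5)])  # the last two are refused
```

Once `request` returns False, the asset has to be either approved or escalated, which forces the “good enough” decision that infinite iteration tends to postpone.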

Benchmarking Quality: Where Automated Workflows Stumble

We must address the “Last 10% Problem.” AI excels at the first 90% of a project. It can generate a stunning landscape, a realistic human face, or a complex architectural interior with ease. But professional delivery often requires a level of brand-specific nuance that current models frequently miss.

Whether it is the exact kerning of a proprietary font or the specific Pantone shade of a corporate logo, an AI Photo Editor still requires a human “finisher.” Current technology also shows a visible gap in character and object persistence across different angles and lighting conditions. If you need the same model to appear in twelve different lifestyle shots, subtle “drift” in facial features between generations can render the entire set unusable for a cohesive campaign.
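Drift across a set can at least be triaged automatically before it reaches the finisher. One common approach is to compare each shot’s face embedding against a reference embedding and flag shots whose cosine similarity falls below a threshold. In the sketch below, the embeddings are assumed to come from some face-embedding model (none is specified here), the three-dimensional vectors are toy values, and the 0.9 threshold is a placeholder that would need calibrating for the model actually used.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("embeddings must be non-zero vectors")
    return dot / norm


def flag_drift(reference, shots, threshold=0.9):
    """Return the ids of shots whose embedding strays too far from the reference."""
    return [
        shot_id for shot_id, embedding in shots.items()
        if cosine_similarity(reference, embedding) < threshold
    ]


reference = [1.0, 0.0, 0.0]       # toy embedding of the approved face
shots = {
    "beach": [0.99, 0.10, 0.0],   # close to the reference
    "kitchen": [0.50, 0.80, 0.0], # noticeably different
}
print(flag_drift(reference, shots))
```

A check like this only narrows the search; a low score says “look here,” and a person still decides whether the face reads as the same character.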

Furthermore, we should maintain a healthy skepticism about “automated” consistency. While tools can match styles, they often fail on spatial logic: a table might have five legs, or a shadow might fall in the wrong direction. These “hallucinations” require a manual review phase that cannot be skipped, regardless of what vendors claim for the underlying model.
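One way to make “cannot be skipped” literal is to model the asset lifecycle as a small state machine in which the only path to delivery runs through human review. The state names and transitions below are a hypothetical sketch, not the workflow of any specific platform.

```python
# Allowed transitions; there is no edge from automated checks straight to approval.
ALLOWED = {
    "generated": {"auto_checked"},
    "auto_checked": {"human_reviewed", "rejected"},
    "human_reviewed": {"approved", "rejected"},
    "approved": {"delivered"},
    "rejected": set(),
    "delivered": set(),
}


def advance(state: str, new_state: str) -> str:
    """Move an asset to new_state, refusing any transition not listed above."""
    if new_state not in ALLOWED[state]:
        raise ValueError(f"cannot move from {state!r} to {new_state!r}")
    return new_state


state = "generated"
for step in ("auto_checked", "human_reviewed", "approved", "delivered"):
    state = advance(state, step)
print(state)
```

Because the rule lives in the transition table rather than in a checklist, a rushed deadline cannot quietly route an unreviewed asset to delivery.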

Operationalizing PicEditor AI: Building a Repeatable Asset Engine

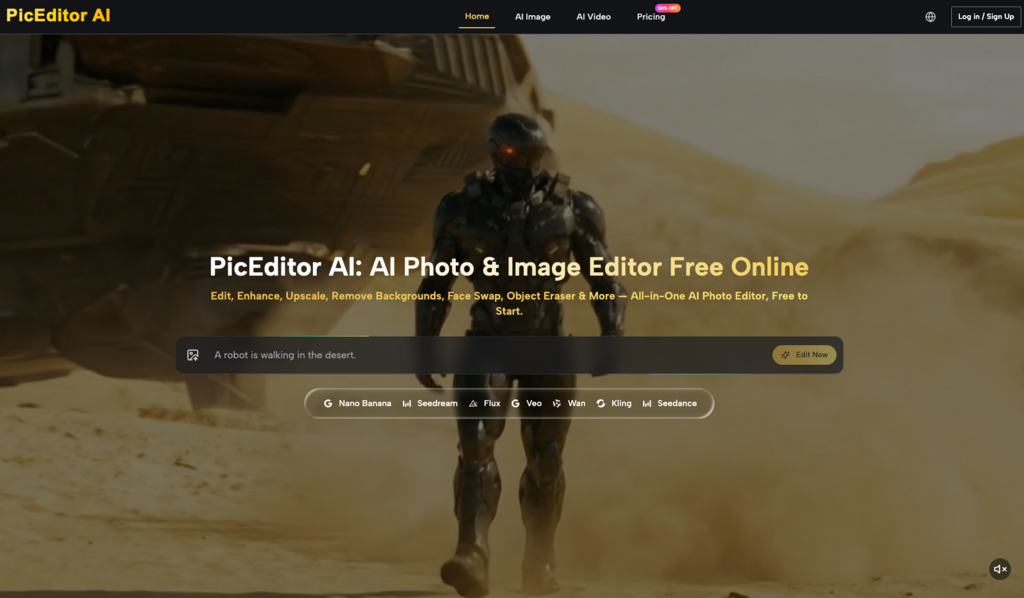

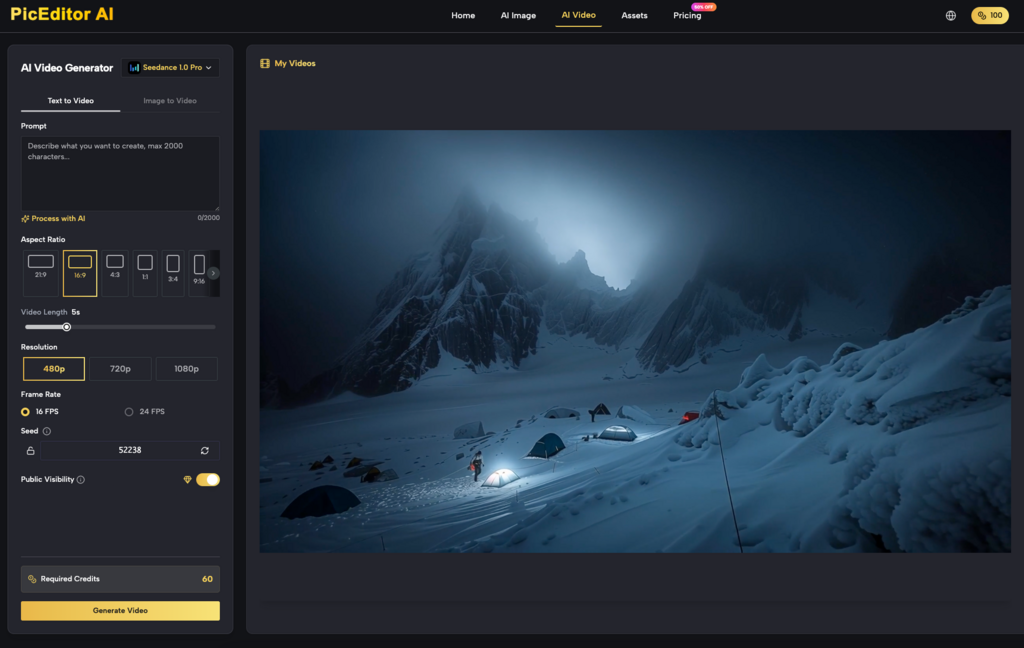

In a professional creative ops framework, the tool should disappear into the workflow. The objective is to reduce “tool-switching latency”—the time lost moving between different platforms for generation, upscaling, and retouching. A platform like PicEditor AI serves as a centralized engine for these tasks, integrating models like Flux for high-fidelity generation and Nano Banana for specific aesthetic outputs.

Standardizing the Pipeline

To make the process repeatable, teams should focus on:

- Prompt Standardization: Creating a library of “brand-vetted” prompts that ensure a consistent aesthetic baseline across decentralized teams.

- Upscale Protocols: Determining exactly when an image should be upscaled to avoid wasting compute resources on drafts that will eventually be discarded.

- Centralized Assets: Using an all-in-one AI Image Editor to handle background removal, face swapping, and object erasure within a single interface, rather than hopping between specialized single-purpose apps.
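An upscale protocol can be reduced to a rule: spend upscaling compute only on approved assets that are still below the delivery size. The sketch below assumes a hypothetical `Asset` record with a status field and a 4096-pixel delivery target, and it assumes the upscaler offers power-of-two factors; adjust both to whatever the actual tool and delivery specs support.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    asset_id: str
    status: str        # e.g. "draft" or "approved" (illustrative values)
    long_edge_px: int  # current length of the longer edge, in pixels


def upscale_queue(assets, delivery_long_edge_px=4096):
    """Return (asset_id, factor) pairs for approved assets below delivery size."""
    queue = []
    for asset in assets:
        if asset.status != "approved":
            continue  # drafts may still be discarded; don't spend compute on them
        if asset.long_edge_px >= delivery_long_edge_px:
            continue  # already large enough
        # Assumption: the upscaler works in power-of-two steps (2x, 4x, ...).
        factor = 2
        while asset.long_edge_px * factor < delivery_long_edge_px:
            factor *= 2
        queue.append((asset.asset_id, factor))
    return queue


assets = [
    Asset("a", "draft", 1024),
    Asset("b", "approved", 1024),
    Asset("c", "approved", 4096),
    Asset("d", "approved", 3000),
]
print(upscale_queue(assets))
```

Filtering on status first is the point of the protocol: every draft that is later discarded costs nothing beyond its original generation.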

By standardizing these inputs, an organization can ensure that a junior designer in one region produces work that is stylistically indistinguishable from a senior designer in another. This isn’t about replacing creativity; it’s about industrializing the production of high-volume assets so that creative energy can be saved for the high-impact conceptual work.
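A brand-vetted prompt library can be as lightweight as named templates with fixed style language and a few open slots, so regional teams vary only the subject matter. This sketch uses Python’s standard `string.Template`; the template names and style phrases are hypothetical placeholders, not vetted brand guidance.

```python
from string import Template

# Hypothetical brand-vetted templates: style wording is fixed, only slots vary.
BRAND_PROMPTS = {
    "lifestyle_hero": Template(
        "$subject in $setting, soft natural light, muted palette, "
        "35mm photo, no text, no logos"
    ),
    "product_flatlay": Template(
        "$subject on a $surface surface, top-down, even studio light, "
        "brand neutral background"
    ),
}


def build_prompt(template_name: str, **slots: str) -> str:
    """Fill a vetted template; substitute() raises KeyError if a slot is missing."""
    return BRAND_PROMPTS[template_name].substitute(**slots)


print(build_prompt("product_flatlay", subject="ceramic mug", surface="marble"))
```

Using `substitute` rather than `safe_substitute` is deliberate: an incomplete prompt fails loudly instead of sending a literal `$setting` to the model.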

The Uncharted Territory of AI-Driven Brand Governance

As we move toward a future where the majority of marketing assets are AI-generated or AI-modified, we face a significant unknown: the long-term impact on brand identity. When every brand has access to the same high-performance models, there is a risk of aesthetic convergence. If every “luxury” brand uses the same “cinematic, 8k, golden hour” prompt parameters, the visual language of the category begins to feel generic.

There is also the ongoing legal and ethical ambiguity regarding AI-generated derivatives. While the velocity benefits are undeniable, the “creative soul” of a brand—that intangible quality that makes a Nike ad feel like Nike and not Adidas—still requires a human-led refinement strategy.

We cannot yet conclude how these models will evolve to handle complex brand guidelines, or whether they will ever truly understand “vibe” without human intervention. What we do know is that velocity is achievable today for those willing to restructure their operations around curation, semantic editing, and real-time iteration. The paradox remains: to go faster, you don’t necessarily need a faster computer; you need a faster way to say “no” to the wrong versions.